Hybrid Deep Reinforcement Learning for Radio Tracer Localisation in Robotic-assisted Radioguided Surgery

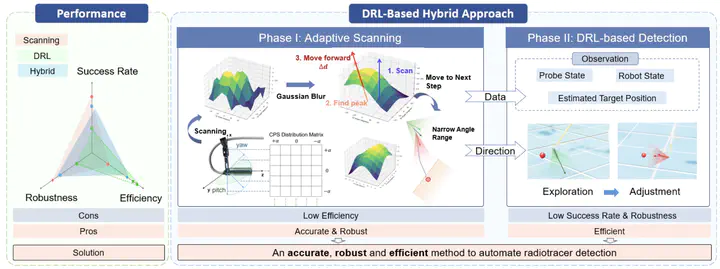

Overview of the proposed DRL-based hybrid approach for radiotracer detection. The method has two phases: Phase I uses adaptive scanning to systematically detect the direction of the target and narrow the detection range, while Phase II employs DRL for precise localization of the radiotracer. Phase I gives the DRL agent initial data from which to estimate a rough target position for its state space at the outset, as well as direction guidance that reduces exploration. The performance comparison shows that the hybrid method combines the accuracy and robustness of classical scanning with the efficiency of DRL.

Abstract— Radioguided surgery, such as sentinel lymph node biopsy, relies on the precise localization of radioactive targets by non-imaging gamma/beta detectors. Manual detection of a radioactive target, guided by a visual display or audible indication of the gamma level, depends heavily on the surgeon's ability to track and interpret the spatial information. This paper presents a learning-based method for autonomous radiotracer detection in robot-assisted surgery, in which the robot navigates the probe to the radioactive target. We propose a novel hybrid approach that combines deep reinforcement learning (DRL) with adaptive robotic scanning. The adaptive grid-based scanning provides an initial direction estimate, while the DRL-based agent navigates efficiently to the target using historical data. Simulation experiments demonstrate a 95% success rate, together with improved efficiency and robustness compared to conventional techniques. Real-world evaluation on the da Vinci Research Kit (dVRK) further confirms the feasibility of the approach, achieving an 80% success rate in radiotracer detection. This method has the potential to enhance consistency, reduce operator dependency, and improve procedural accuracy in radioguided surgeries.
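To make the role of the adaptive grid-based scanning phase concrete, the following is a minimal Python sketch of a coarse-to-fine grid scan that yields a rough target position and a direction hint. It is not the authors' implementation. The noise-free inverse-square count model, the halving schedule, and all names (`count_rate`, `adaptive_grid_scan`, `direction_hint`) are assumptions introduced here for illustration only.

```python
import math


def count_rate(probe, source, strength=1000.0, eps=1e-3):
    """Hypothetical detector model (an assumption, not from the paper):
    noise-free counts that fall off with the squared probe-source distance."""
    d2 = sum((p - s) ** 2 for p, s in zip(probe, source))
    return strength / (d2 + eps)


def adaptive_grid_scan(measure, center, half_width, n=5, rounds=4):
    """Illustrative Phase I: sample an n x n grid, recentre on the strongest
    reading, halve the scan window, and repeat for a fixed number of rounds."""
    cx, cy = center
    for _ in range(rounds):
        step = 2.0 * half_width / (n - 1)
        points = [
            (cx - half_width + i * step, cy - half_width + j * step)
            for i in range(n)
            for j in range(n)
        ]
        cx, cy = max(points, key=measure)  # grid point with the highest count
        half_width /= 2.0                  # narrow the detection range
    return (cx, cy), half_width


def direction_hint(start, estimate):
    """Unit vector from the probe's start position towards the rough estimate."""
    dx, dy = estimate[0] - start[0], estimate[1] - start[1]
    norm = math.hypot(dx, dy)
    return (0.0, 0.0) if norm == 0.0 else (dx / norm, dy / norm)


if __name__ == "__main__":
    source = (3.2, -2.6)  # hypothetical target position, unknown to the scanner
    start = (0.0, 0.0)
    estimate, window = adaptive_grid_scan(
        lambda p: count_rate(p, source), start, half_width=8.0
    )
    print("rough estimate:", estimate, "remaining half-width:", window)
    print("direction hint:", direction_hint(start, estimate))
```

In a hybrid scheme of the kind described, the rough estimate and direction hint would be handed to the DRL agent as part of its initial state, so that the agent starts near the target instead of exploring the whole workspace. A real detector would also have measurement noise and a directional response, both of which this sketch ignores.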
